How does Responsive Design affect conversion rate?

A/B testing something that we all take for granted

tl;dr

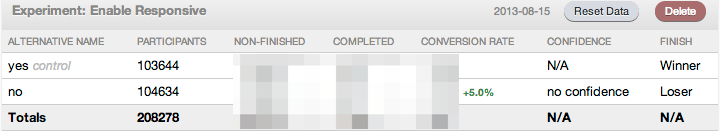

An A/B test revealed that our responsive site didn't perform any better (or worse) than our non-responsive one.

Background

Sometime in 2013, I was discussing Responsive Design with a few of the other Science-backed startup CTOs and, for the sake of argument, asked whether anyone had run a test, or knew of a published result, showing that responsive ecommerce sites convert better. We all spend significant time and energy making sure that our designers and developers are always thinking about how the site will reformat for phones, tablets, and computers, but none of us had any evidence that the effort is worthwhile.

Since none of us had the answer, I went to the place where I was sure I'd find one: Quora. Alas, none was to be had, so I posted a question and waited...

The test

I decided that the only way to truly answer this question was to run the A/B test myself. Since our site is built on the Bootstrap 2.x grid, it was easy to enable or disable responsive behavior by including or excluding the Bootstrap responsive CSS. Using the Split A/B testing gem for Rack-based (read: Ruby on Rails) apps, the setup for this test was dead simple. Since Cult Cosmetics is an ecommerce store, our metric was conversion to purchase (completed orders). We ran the test over several months in late 2013.
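
The setup code from the original test isn't reproduced here. As a rough, standalone picture of the mechanism (deliberately not the Split API), the sketch below assigns each visitor to a variant by hashing an ID, then decides whether to include the separate responsive stylesheet that Bootstrap 2.x ships. The experiment name, variant labels, and `visitor_id` are hypothetical.

```ruby
require "digest"

# Hypothetical experiment name and variant labels, not taken from the original test.
EXPERIMENT = "responsive_design"
VARIANTS = %w[responsive non_responsive].freeze

# Deterministically assign a visitor to a variant, so that repeat visits
# by the same visitor always see the same layout.
def variant_for(visitor_id)
  digest = Digest::SHA256.hexdigest("#{EXPERIMENT}:#{visitor_id}")
  VARIANTS[digest.to_i(16) % VARIANTS.size]
end

# Bootstrap 2.x keeps its responsive rules in a separate stylesheet,
# so the non-responsive variant simply leaves that file out.
def stylesheets_for(variant)
  sheets = ["bootstrap.css"]
  sheets << "bootstrap-responsive.css" if variant == "responsive"
  sheets
end
```

In a Rails layout, the returned list would feed the stylesheet tags. A gem like Split takes care of assignment and conversion tracking for you, so treat this only as an illustration of what the toggle amounts to.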

Results

Sadly, the A/B test was inconclusive: it showed a slight improvement with responsive disabled, but the difference was not statistically significant. This is perhaps telling in and of itself, since I had hoped to see a statistically significant result in favor of responsive design. Since we already had the responsive code built, and I prefer responsive myself, we now have responsive design enabled for all visitors. However, this makes me question whether it is worth investing the time and effort to build responsive ecommerce sites in the future.
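
For readers who want to check significance on their own numbers, one common approach is a two-proportion z-test. This is a minimal sketch under that assumption; it is not necessarily the method behind the result above, and the visitor and order counts are made up purely for illustration.

```ruby
# Two-proportion z-test on conversion counts.
# Returns [z, two-sided p-value] using the normal approximation.
def two_proportion_z_test(conv_a, n_a, conv_b, n_b)
  rate_a = conv_a.to_f / n_a
  rate_b = conv_b.to_f / n_b
  # Pooled conversion rate under the null hypothesis of no difference.
  pooled = (conv_a + conv_b).to_f / (n_a + n_b)
  std_err = Math.sqrt(pooled * (1 - pooled) * (1.0 / n_a + 1.0 / n_b))
  z = (rate_b - rate_a) / std_err
  # Two-sided p-value from the standard normal distribution.
  p_value = Math.erfc(z.abs / Math.sqrt(2))
  [z, p_value]
end

# Hypothetical counts, NOT the real data from this test:
# 100 orders from 10,000 visitors vs. 105 orders from 10,000 visitors.
z, p_value = two_proportion_z_test(100, 10_000, 105, 10_000)
puts format("z = %.2f, p = %.2f", z, p_value)
```

With these made-up counts, a 5% relative difference at a 1% conversion rate gives a p-value far above the usual 0.05 threshold, which shows how much traffic a small lift needs before it can be detected.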

Please note that +5.0% means a 5% relative improvement in conversion rate, not a 5% absolute improvement (i.e., from 1% to 1.05%, not from 1% to 6%). Also, this test was not specific to mobile/tablet visitors; it applied to all visitors. However, the test should not have made a noticeable difference to visitors on computers, so their conversion rate should have been the same in either scenario and thus should not have skewed the results. Additionally, Google Analytics data from the period of the A/B test shows that ~63% of our visits were on mobile devices or tablets and the rest on computers (no, that is not a typo), so there was a noticeable difference for the majority of our visitors.
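
To make the relative-versus-absolute distinction concrete, here is a small sketch using the 1% to 1.05% example from the note above. The helper names are my own, chosen for illustration.

```ruby
# Relative lift: the change expressed as a fraction of the baseline rate.
def relative_lift(baseline_rate, variant_rate)
  (variant_rate - baseline_rate) / baseline_rate
end

# Absolute lift: the change expressed in percentage points.
def absolute_lift_points(baseline_rate, variant_rate)
  (variant_rate - baseline_rate) * 100
end

baseline = 0.01   # 1% conversion rate
variant  = 0.0105 # 1.05% conversion rate

puts format("relative lift: %+.1f%%", relative_lift(baseline, variant) * 100)
puts format("absolute lift: %+.2f percentage points", absolute_lift_points(baseline, variant))
```

This prints a relative lift of +5.0% and an absolute lift of +0.05 percentage points, which is the reading intended for the figures in this post.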

If you have any results of your own to add, please comment with a link to them!

Also posted on Quora (please upvote the question & answer if this was helpful)


Startup Founder | Engineering Leader with an MBA